Visual AI Takes Quality Control to a New Level

Comprehensive guide for automated visual industrial quality control with AI and Machine Learning. From image recognition to anomaly detection.

Have you heard about The Big Hack? The story was about a tiny probe (a small chip) inserted into computer motherboards by Chinese manufacturing companies. Attackers could then infiltrate any server workstation containing these motherboards, many of which were installed in large US-based companies and government agencies. The probes were so small, and the motherboards so complex, that they were almost impossible to spot with the human eye. You can take this post as a guide to the latest trends of AI in industry, with a primary focus on AI-based visual inspection systems.

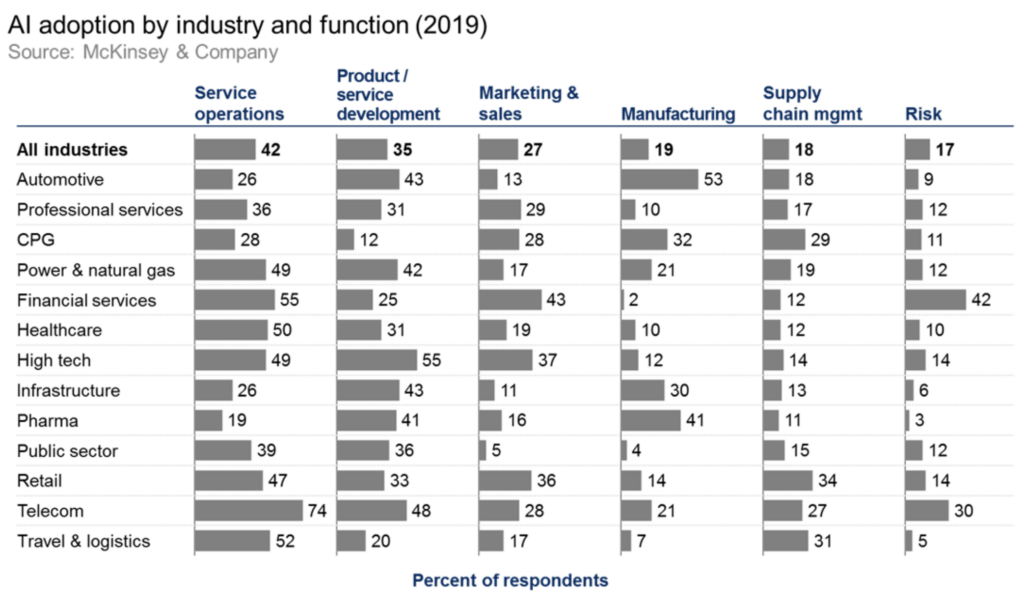

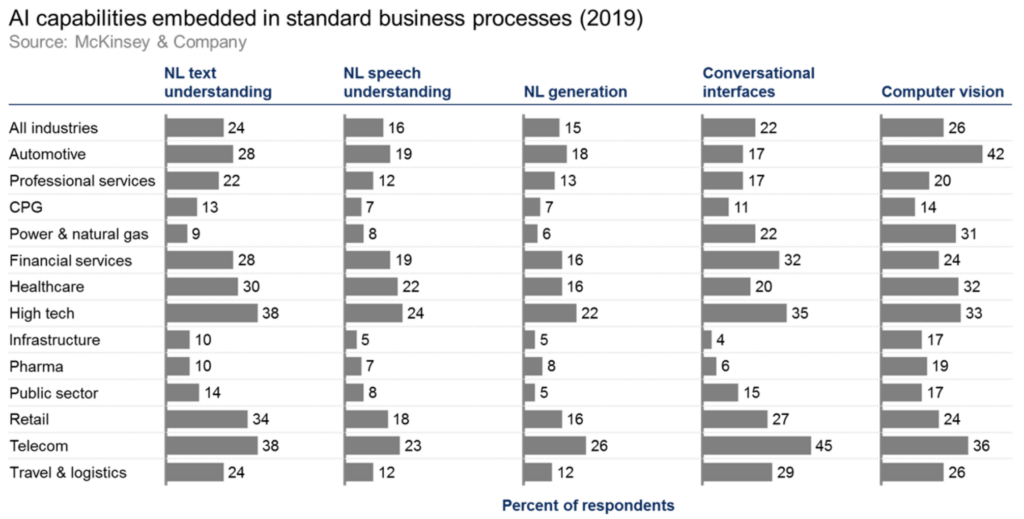

AI Adoption by Companies Worldwide

Let’s start with some interesting stats and news. AI and Machine Learning are becoming common across numerous industries. According to this report by Stanford University, AI adoption is increasing globally. More than 50% of respondents said their companies were using AI, and adoption grew fastest in the Asia-Pacific region. Some people refer to the automation of factory processes, including digitalization and the use of AI, as the Fourth Industrial Revolution (the so-called Industry 4.0).

The data show that the automotive industry is the largest adopter of AI in manufacturing, relying heavily on machine learning, computer vision, and robotics.

Other industries, such as Pharma or Infrastructure, are using computer vision in their production lines as well. Financial services, on the other hand, are using AI mostly in operations, marketing & sales (with a focus on Natural Language Processing – NLP).

The MIT Technology Review cited leading artificial intelligence expert Andrew Ng, who has helped tech giants like Google implement AI solutions, as saying that factories are AI’s next frontier. For example, while it would be difficult to inspect parts of electronic devices with the naked eye, the inexpensive camera of a recent Android phone or iPhone can provide high-resolution images and be connected to any industrial system.

Adopting AI brings major advantages, but also potential risks that need to be mitigated. It is no surprise that companies are mainly concerned about the cybersecurity of such systems. Imagine you could lose a billion dollars if your factory stopped working (like Honda in this case). Another obstacle is potential errors in machine learning models. Techniques that make AI systems explainable can help to discover such errors. For now, the explainability of AI is a concern for only 19% of companies, so there is room to improve. Getting insight from the algorithms can improve the processes and the quality of the products. Besides security, there are also political and ethical questions (e.g., job replacement or privacy) that companies are worried about.

This survey by McKinsey & Company brings interesting insights into Germany’s industrial sector. It demonstrates the potential of AI for German companies in eight use cases, one of which is automated quality testing. The expected benefit is a 50% productivity increase due to AI-based automation. Needless to say, Germany is a bit ahead with the AI implementation strategy – there are already several plans made by German institutions to create standardised AI systems that will have better interoperability, certain security standards, quality criteria, and test procedures.

Highly developed economies like Germany, with a high GDP per capita and challenges such as a quickly ageing population, will increasingly need to rely on automation based on AI to achieve GDP targets.

McKinsey & Company

Another study by PwC predicts that the total expected economic impact of AI in the period until 2030 will be about $15.7 trillion. The greatest economic gains from AI are expected in China (26% higher GDP in 2030) and North America.

What is Visual Quality Control?

The human visual system is naturally very selective in what it perceives, focusing on one thing at a time and not actually seeing the whole image (direct vs. peripheral view). The cameras, on the other hand, see all the details, and with the highest resolution possible. Therefore, stories like The Big Hack show us the importance of visual control not only to ensure quality but also safety. That is why several companies and universities decided to develop optical inspection systems engaging machine learning methods able to detect the tiniest difference from the reference board.

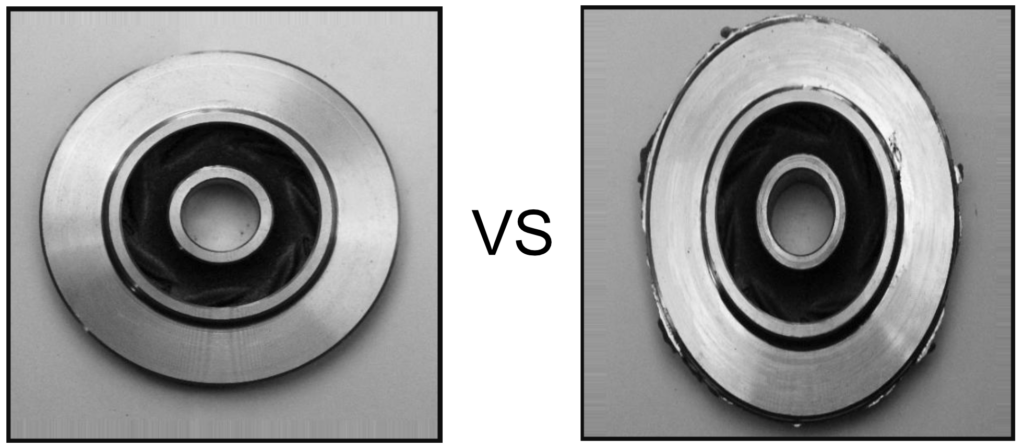

In general, visual quality control is a method or process to inspect equipment or structures to discover defects, damages, missing parts, or other irregularities in production or manufacturing. It is an important method of confirming the quality and safety of manufactured products. Optical inspection systems are mostly used for visual quality control in factories and assembly lines, where the control would be hard or ineffective with human workers.

What Are the Main Benefits of Automatic Visual Inspection?

Here are some of the main reasons why automatic visual inspection brings a major advantage to businesses:

- The human eye is imprecise – Even though our visual system is a magnificent thing, it needs a lot of “optimization” to be effective, which makes it prone to optical illusions. The focused view can miss many details, and our visible spectrum is limited (380–750 nm), so we are unable to see NIR wavelengths (source). Cameras and computer systems, on the other hand, can be calibrated to different conditions and are more suitable for highly precise analyses.

- Manual checking – Checking items manually one by one is a time-consuming process. Smart automation makes it possible to check more items, faster. It also reduces the number of defective items that are released to customers.

- The complexity – Some assembly lines can produce thousands of various products of different shapes, colours, and materials. For humans, it can be very difficult to keep track of all possible variations.

- Quality – Providing better and higher quality products by reducing defective items and getting insights into the critical parts of the assembly line.

- Risk of damage – Machine vision can reduce the risk of item damage and contamination by a person.

- Workplace safety – Making the work environment safer by inspecting it for potentially dangerous situations (e.g. detecting protective wearables such as safety helmets on construction sites), inspection in radioactive or biohazard environments, detection of fire, detection of COVID face masks, and many more.

- Saving costs – Labour work can be pretty expensive in the Western world.

For example, the average quality control inspector salary in the US is about 40k USD. Companies consider numerous options when saving costs, such as moving factories to other countries, streamlining operations, or replacing workers with robots. As mentioned above, this goes hand in hand with some political and ethical questions. I think the most reasonable solution in the long term is the cooperation of workers with robotic systems. This will make the process more robust, reliable, and effective.

- Costs of AI systems – Sooner or later, modern technology and automation will be common in all companies (startups as well as enterprises). The adoption of automatic solutions based on AI will make the transition more affordable.

Where is Visual Quality Control Used?

Let’s take a look at some of the fields where AI visual control helps:

- Cosmetics – Inspection of beauty products for defects and contaminations, colour & shape checks, controlling glass or plastic tubes for cleanliness and rejecting scratched pieces.

- Pharma & Medical – Visual inspection for pharmaceuticals: rejecting defective and unfilled capsules or tablets, checking the filling level of bottles and the integrity of items, or finding surface imperfections on medical devices. High-resolution recognition of materials.

- Food Industry and Agriculture – Food and beverage inspection for freshness. Checking the presence or position of label print, barcodes, and QR codes.

A great example of industrial IoT is this story about a Japanese cucumber farmer who developed a monitoring system for quality check with deep learning and TensorFlow.

- Automotive – Examination of forged metallic parts, plastic parts, cracks, stains or scratches in the paint coating, and other surface and material imperfections. Monitoring quality of automotive parts (tires, car seats, panels, gears) over time. Engine monitoring and predictive autonomous maintenance.

- Aerospace – Checking for the presence and quality of critical components and material, spotting the defective parts, discarding them, and therefore making the products more reliable.

- Transportation – Rail surface defects control (example), aircraft maintenance check, or baggage screening in airports – all of them require some kind of visual inspection.

- Retail/Consumer Goods & Fashion – Checking assembly line items made of plastics, polymers, wood, and textile, and packaging. Visual quality control can be deployed for the manufacturing process of the goods. Sorting imprecise products.

- Energy, Mining & Heavy Industries – Detecting cracks and damage in wind blades or solar panels, visual control in nuclear power plants, and many more.

It’s interesting to see that more and more companies choose collaborative platforms such as Kaggle to solve specific problems. In 2019, the contest by Russian company Severstal on Kaggle led to tens of solutions for the steel defect detection problem.


![Image of flat steel defects from Severstal competition. [Source: Kaggle]](https://www.ximilar.com/wp-content/uploads/2021/02/thumb76_76-1.png)

- Other, e.g. safety checks – Checking whether people are present in specific zones of the factory and whether they are wearing helmets, or stopping a robotic arm if a worker is nearby.

The Technology Behind AI Quality Control

There are several different approaches and technologies that can be used for visual inspection on production lines. The most common ones nowadays use some kind of neural network model.

Neural Networks – Deep Learning

Neural Networks (NN) are computational models that accept the input data and output relevant information. To make the neural network useful (finding the weights for the connection between the neurons and layers), we need to feed the network with some initial training data.

The advantage of neural networks is their power to internally represent the training data, which typically leads to better performance in computer vision than other machine learning models. However, they also bring challenges, such as computational demands and overfitting.

[Un|Semi|Self] Supervised Learning

If a machine-learning algorithm (NN) requires ground truth labels, i.e. annotations, then we are talking about supervised learning. If not, then it is an unsupervised method or something in between: a semi-supervised or self-supervised method. Building an annotated dataset is much more expensive than simply obtaining data with no labels. The good news is that the latest research in neural networks tackles many problems with unsupervised learning.
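To make the difference concrete, here is a minimal sketch of the unsupervised idea: a threshold is learned from defect-free samples only, with no defect labels. The scores, the `fit_threshold` helper, and the "mean plus three standard deviations" rule are illustrative assumptions, not a method described in this article.

```python
from statistics import mean, stdev

def fit_threshold(normal_scores, k=3.0):
    """Learn an anomaly threshold from defect-free samples only (no defect labels)."""
    return mean(normal_scores) + k * stdev(normal_scores)

def is_anomalous(score, threshold):
    """A sample is flagged when its score lies above the learned threshold."""
    return score > threshold

# Hypothetical per-image scores, e.g. mean pixel difference from a reference image.
normal_scores = [0.10, 0.12, 0.11, 0.09, 0.13, 0.10, 0.11]
threshold = fit_threshold(normal_scores)

# One typical item and one clearly unusual item.
flags = [is_anomalous(s, threshold) for s in (0.11, 0.45)]
print(flags)
```

A supervised model would instead need examples of each defect type with annotations, which is exactly the expensive part.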

Here is the list of common services and techniques for visual inspection:

- Image Recognition – Simple neural network that can be trained for categorization or error detection on products from images. The most common architectures are based on convolution (CNN).

- Object Detection – Model able to predict the exact position (bounding box) of specific parts. Suitable for defect localization and counting.

- Segmentation – More complex than object detection, image segmentation produces a per-pixel prediction.

- Image Regression – Regress/get a single decimal value from the image. For example, estimating the level of wear of an item.

- Anomaly Detection – Flags images that contain an anomaly and indicates where it is. Often built on generative models such as GANs, with Grad-CAM-style heatmaps used to explain the prediction.

- OCR – Optical Character Recognition is used for getting and reading text from images.

- Image matching – Matching the picture of the product to the reference image and displaying the difference.

- Other – There are also other solutions that do not require data at all, most of the time using some simple, yet powerful computer vision technique.
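As an illustration of the image matching item in the list above, the following sketch compares a grayscale image with a reference and reports where they differ. It assumes both images are already aligned and have the same size, and represents them as plain lists of pixel rows; `diff_mask`, `bounding_box`, and the tolerance value are hypothetical names and settings chosen for this example.

```python
def diff_mask(image, reference, tolerance=10):
    """Return a 0/1 mask marking pixels that differ from the reference by more than tolerance."""
    if len(image) != len(reference) or any(
        len(row) != len(ref_row) for row, ref_row in zip(image, reference)
    ):
        raise ValueError("image and reference must have the same shape")
    return [
        [1 if abs(p - q) > tolerance else 0 for p, q in zip(row, ref_row)]
        for row, ref_row in zip(image, reference)
    ]

def bounding_box(mask):
    """Smallest (top, left, bottom, right) box covering all marked pixels, or None."""
    coords = [(y, x) for y, row in enumerate(mask) for x, v in enumerate(row) if v]
    if not coords:
        return None
    ys = [y for y, _ in coords]
    xs = [x for _, x in coords]
    return (min(ys), min(xs), max(ys), max(xs))

# A uniform 4x5 reference and a copy with two altered pixels.
reference = [[100] * 5 for _ in range(4)]
image = [row[:] for row in reference]
image[1][2] = 180
image[2][3] = 30

mask = diff_mask(image, reference)
box = bounding_box(mask)
print(box)
```

In practice the images would first need to be registered (aligned) and normalised for lighting, which this sketch leaves out.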

If you would like to dive a bit deeper into the process of building a model, you can check my posts on Medium, such as How to detect defects on images.

Typical Types and Sources of Data for Visual Inspection

Common Data Sources

RGB images – The most common data type and the easiest to get. A simple 1080p camera that you can connect to a Raspberry Pi costs about $25.

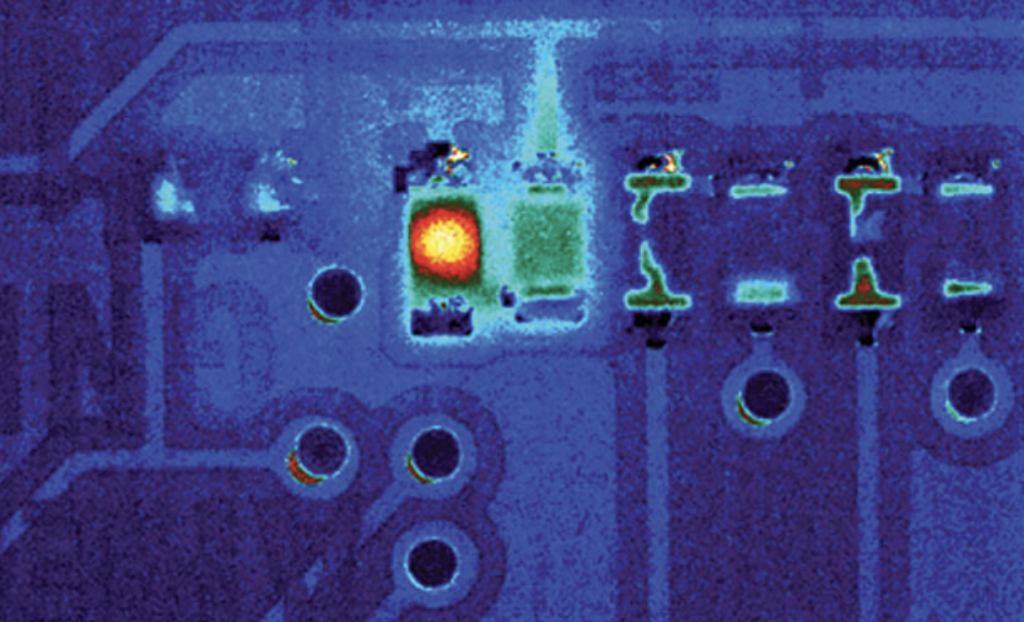

Thermography – Thermal quality control via infrared cameras. It is mostly used to detect flaws under the surface that are not visible to simple RGB cameras, and for gas imaging, fire prevention, and observing the behaviour of electronics under different conditions. If you want to know more, I recommend reading the articles in Quality Magazine.

3D scanning, Lasers, X-ray, and CT scans – Creating 3D models from special depth scanners gives you a better insight into material composition, surface, shape, and depth.

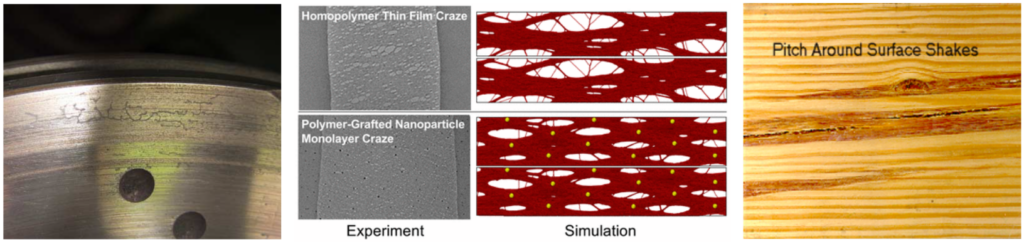

Microscopy – Due to the rapid development and miniaturization of technologies, sometimes we need a more detailed and precise view. Microscopes can be used in an industrial setting to ensure the best quality and safety of products. Microscopy is used for visual inspection in many fields, including material sciences and industry (stress fractures), nanotechnology (nanomaterial structure), or biology & medicine. There are many microscopy methods to choose from, such as stereomicroscopy, electron microscopy, opto-digital or purely digital microscopes, and others.

Common Inspection Errors

- scratches

- patches

- knots, shakes, checks, and splits in the wood

- crazing

- pitted surface

- missing parts

- label/print damage

- corrosion

- coating nonuniformity

Examples of Datasets for Visual Inspection

- Severstal Kaggle Dataset – A competition for the detection of defects on flat sheet steel.

- MVTec AD – More than 5,000 high-resolution annotated images in 15 object and texture categories (divided into defective and defect-free images).

- Casting Dataset – Casting is a manufacturing process in which a liquid material is usually poured into a form/mould. About 7 thousand images of submersible pump defects.

- Kolektor Surface-Defect Dataset – Dataset of microscopic fractures or cracks in electrical commutators.

- PCB Dataset – Annotated images of printed circuit boards.

AI Quality Control Use Cases

We talked about a wide range of applications for visual control with AI and machine learning. Here are three industrial image recognition use cases we worked on in 2020. All of them required automatic optical inspection (AOI) and partial customization when building the model, and they involved different types of data and deployment (cloud/on-premise instance/smartphone). We are glad to hear that during the COVID-19 pandemic, our technologies helped customers keep their factories open.

Our typical workflow for a customized solution is the following:

- Setup, Research & Plan: If we don’t know how to solve the problem from the initial call, our Machine Learning team does the research and finds the optimal solution for you.

- Gathering Data: We sit with your team and discuss what kind of data samples we need. If you can’t acquire and annotate data yourself, our team of annotators will work on obtaining a training dataset.

- First prototype: Within 2–4 weeks we prepare the first prototype or proof of concept. The proof of concept is a lightweight solution for your problem. You can test it and evaluate it by yourself.

- Development: Once you are satisfied with the prototype results, our team can focus on the development of the full solution. We work mostly in an iterative way improving the model and obtaining more data if needed.

- Evaluation & Deployment: If the system performs well and meets the criteria set up in the first calls (mostly some evaluation on the test dataset and speed performance), we work on the deployment. The system can run in our cloud, on-premise, or on embedded hardware in the factory. It’s up to you. We can even provide the source code so your team can edit it in the future.
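The evaluation step on a test dataset can be sketched as follows. The labels, the predictions, and the 0.7 acceptance thresholds are made-up illustrative values; the actual criteria are agreed per project and are not specified here.

```python
def precision_recall(y_true, y_pred):
    """Precision and recall for the positive class (1 = defective, 0 = OK)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall

# Hypothetical ground truth and model output for eight test images.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]

precision, recall = precision_recall(y_true, y_pred)

# Assumed acceptance criteria, for illustration only.
meets_criteria = precision >= 0.7 and recall >= 0.7
print(precision, recall, meets_criteria)
```

Recall matters when a missed defect is costly, while precision matters when false alarms cause unnecessary rework, so the two thresholds are usually set separately.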

Use case: Image recognition & OCR for wood products

One of our customers contacted us with a request to build a system for categorization and quality control of wooden products. With Ximilar Platform we were able to easily develop and deploy a camera system over the assembly line that sorted the products into the bins. The system can identify the defective print on the products with optical character recognition technology (OCR), and the surface control of wood texture is enabled by a separate model.

The technology is connected to a simple smartphone/tablet camera in the factory and can handle tens of products per second. This way, our customer was able to reduce rework and manual inspections which led to saving thousands of USD per year. This system was built with the Ximilar Flows service.

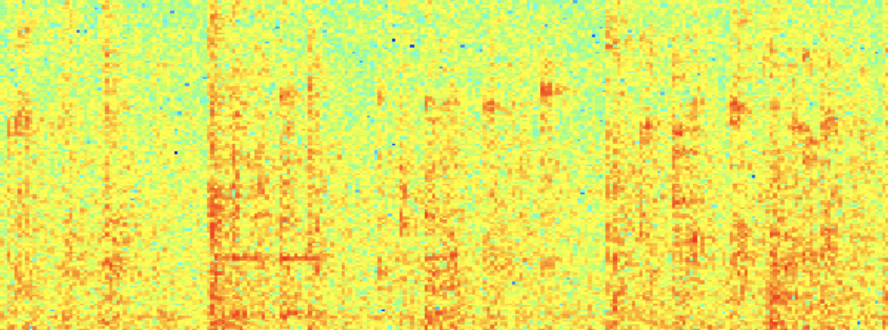

Use case: Spectrogram analysis from car engines

Another project we successfully deployed was the detection of malfunctioning engines. We did it by transforming the sound recorded from the car into a spectrogram image. After that, we trained a deep neural network that recognises problematic car engines and can tell you the specific problem of the engine.

The good news is that this system can also detect anomalies in an unsupervised way (no need for data labelling) using GANs.
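The sound-to-image step can be sketched with a naive short-time Fourier transform. This is a pure-Python illustration under assumed settings (frame size, hop, Hann window, a synthetic 1 kHz tone in place of an engine recording), not the pipeline used in the project; a real system would use an FFT library.

```python
import cmath
import math

def spectrogram(signal, frame_size=64, hop=32):
    """Magnitude spectrogram via a naive short-time DFT with a Hann window.

    Returns a list of frames, each holding frame_size // 2 + 1 magnitudes.
    """
    window = [0.5 - 0.5 * math.cos(2 * math.pi * n / frame_size) for n in range(frame_size)]
    frames = []
    for start in range(0, len(signal) - frame_size + 1, hop):
        chunk = [signal[start + n] * window[n] for n in range(frame_size)]
        frames.append([
            abs(sum(chunk[n] * cmath.exp(-2j * math.pi * k * n / frame_size)
                    for n in range(frame_size)))
            for k in range(frame_size // 2 + 1)
        ])
    return frames

# Synthetic stand-in for a recording: a 1 kHz tone sampled at 8 kHz.
sample_rate = 8000
tone_hz = 1000
signal = [math.sin(2 * math.pi * tone_hz * t / sample_rate) for t in range(512)]

spec = spectrogram(signal)

# The strongest frequency bin of the first frame, converted back to Hz.
peak_bin = max(range(len(spec[0])), key=lambda k: spec[0][k])
peak_hz = peak_bin * sample_rate / 64
print(len(spec), len(spec[0]), peak_hz)
```

Each frame becomes one column of the image (time on one axis, frequency on the other), which is what allows an image recognition model to be trained on audio.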

Use case: Wind turbine blade damage from drone footage

According to Bloomberg, there is no simple way to recycle a wind turbine, and it is therefore crucial to prolong the lifespan of wind power plants. They can be hit by lightning and damaged by extreme weather and other natural forces.

That’s why we developed a system for our customers that checks rotor blade integrity and damage using drone video footage. The videos are uploaded to the system, and inspection is done with an object detection model that identifies potential problems. Thousands of videos are analyzed in one batch, so we built a workstation (with NVIDIA RTX GPU cards) able to handle such a load.
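Object detection models like the one mentioned above are commonly evaluated by comparing predicted bounding boxes with annotated ones using intersection over union (IoU). The sketch below shows that general metric; the box coordinates are invented, and the article does not state which metric was used in this project.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    # Width and height are clamped to zero when the boxes do not overlap.
    inter = max(0, ix2 - ix1) * max(0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union else 0.0

# Hypothetical annotated damage region and model prediction on one video frame.
annotated = (10, 10, 50, 50)
predicted = (30, 30, 70, 70)

score = iou(annotated, predicted)
print(round(score, 3))
```

A prediction is typically counted as correct when its IoU with an annotated box exceeds a chosen threshold, with 0.5 being a common choice.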

Ximilar Advantages in Visual AI Quality Control

- An end-to-end and easy-to-use platform for Computer Vision and Machine Learning, with enterprise-ready features.

- Processing hundreds of images per second on an average computer.

- Train your model in the cloud and use it offline in your factory without an internet connection. Thanks to TensorFlow, you can use the model on any computer, edge device, GPU card, or embedded hardware (Raspberry Pi or NVIDIA Jetson connected to a camera). We also provide optimized CPU models on Intel devices through OpenVINO technology.

- Easily gather more data and teach models on new defects within a day.

- Evaluation on an independent dataset, and model versioning.

- A customized yet affordable solution providing the best outcome with pixel-accurate recognition.

- Advanced image management and annotation platform suitable for creating intelligent vision systems.

- Image augmentation settings that can be tuned for your problem.

- Fast machine learning models that can be connected to your industrial camera or smartphone for industrial image processing robust to lighting conditions, object motion, or vibrations.

- Great team of experts, available to communicate and help.

To sum up, it is clear that artificial intelligence and machine learning are becoming common in the majority of industries working with automation, digital data, and quality or safety control. Machine learning has a lot to offer to factories with manual or robotic assembly lines, or even fully automated production, and also to various specialized fields, such as material sciences and the pharmaceutical and medical industries.

Are you interested in creating your own visual control system?

Tags & Themes

Related Articles

Top Clarifai Alternatives in 2026 – Find the Best Fit for Your Visual AI Needs

Clarifai shuts down July 17, 2026. See which services Ximilar replaces, where it goes further, and how to migrate fast.

How to Fine-Tune a Vision Language Model Without Writing Code

Ximilar’s no-code VLM platform lets you fine-tune small, private AI models and deploy them on any device – no ML expertise required.

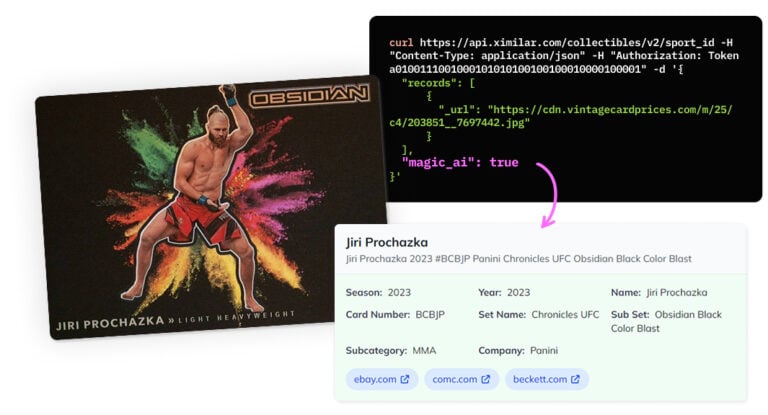

Recognize New & Rare Cards With AI Sports Card Identification

With millions of cards and variations, even the best databases miss some. We refined our sports cards recognition to identify cards even when no match exists.