Plan the Image Recognition Task

Before we start, let’s think through what the model needs to do.

Vision Model — “Parameter Extractor”

We want a model that extracts information from images. We will focus on three parameters:

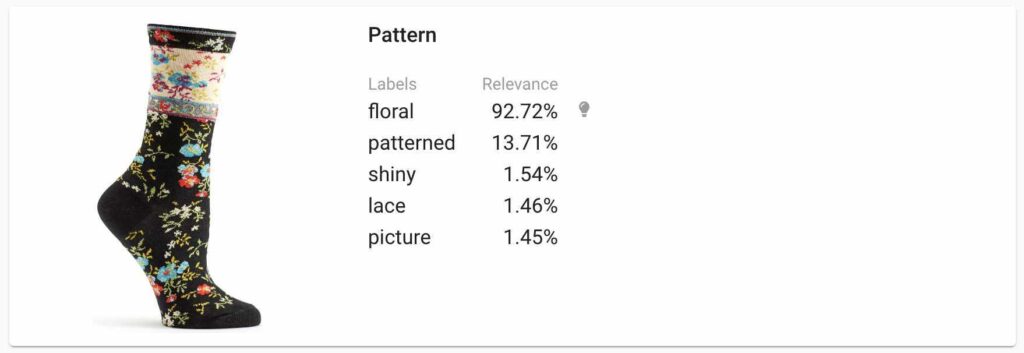

- Pattern (dotted, striped, winter, summer)

- Colour

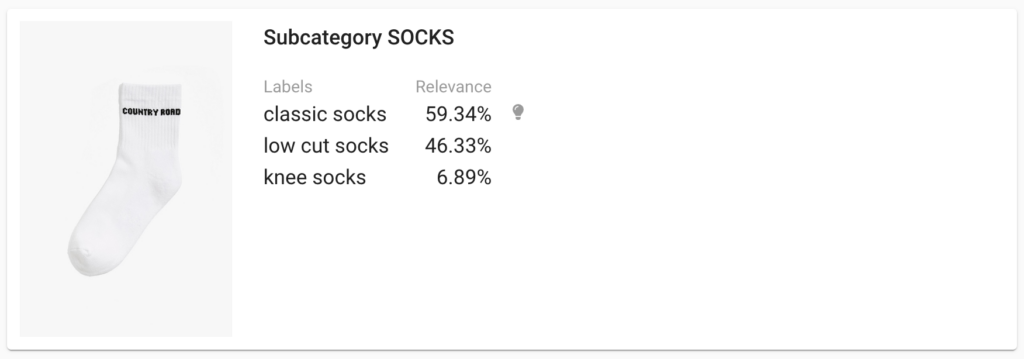

- Sock type (ankle length, quarter length, crew length)

Each customer’s image is evaluated and labelled. This tells us which colours, patterns, and sock types each customer likes most. That’s great, because we can now tailor the next newsletter to each customer’s style.
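A minimal sketch of that per-customer summary, assuming each image has already been labelled by the extraction models. The `SockLabels` record and the `customer_profile` helper are hypothetical names used only for illustration; the "favourite" value of each attribute is simply the most frequent one.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class SockLabels:
    """Labels predicted for one customer image (hypothetical schema)."""
    colour: str
    pattern: str    # e.g. "dotted", "striped", "winter", "summer"
    sock_type: str  # e.g. "ankle", "quarter", "crew"


def customer_profile(labels: list[SockLabels]) -> dict[str, str]:
    """Return the most frequent colour, pattern and sock type for one customer."""
    if not labels:
        return {}
    colours = Counter(item.colour for item in labels)
    patterns = Counter(item.pattern for item in labels)
    types = Counter(item.sock_type for item in labels)
    return {
        "favourite_colour": colours.most_common(1)[0][0],
        "favourite_pattern": patterns.most_common(1)[0][0],
        "favourite_sock_type": types.most_common(1)[0][0],
    }


if __name__ == "__main__":
    images = [
        SockLabels("blue", "striped", "crew"),
        SockLabels("blue", "dotted", "ankle"),
        SockLabels("orange", "striped", "crew"),
    ]
    print(customer_profile(images))
```

The resulting dictionary is the kind of input a newsletter template could use to pick which products to show.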

At this point, matching can be as simple as a few rules saying that blue and orange socks go together, that striped goes with dotted, and so on. This is, of course, a hack that does not bring much value to the fashion field, but it will work at the beginning.
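Such a rule-based matcher fits in a few lines. The sketch below is a hypothetical illustration: `COLOUR_RULES`, `PATTERN_RULES`, `goes_with` and `suggest` are made-up names, the catalogue items are invented, and the only rules encoded are the two examples from the text. Pairs are stored as frozensets, so "blue goes with orange" also means "orange goes with blue".

```python
from __future__ import annotations

# Hand-written matching rules (hypothetical); only the examples from the text.
# A frozenset is unordered, so each rule works in both directions.
COLOUR_RULES = {frozenset({"blue", "orange"})}
PATTERN_RULES = {frozenset({"striped", "dotted"})}


def goes_with(a: dict[str, str], b: dict[str, str]) -> bool:
    """Return True if any rule says sock `a` and sock `b` go together."""
    colour_ok = frozenset({a["colour"], b["colour"]}) in COLOUR_RULES
    pattern_ok = frozenset({a["pattern"], b["pattern"]}) in PATTERN_RULES
    return colour_ok or pattern_ok


def suggest(owned: dict[str, str], catalogue: list[dict[str, str]]) -> list[str]:
    """Return the names of catalogue socks that match a sock the customer owns."""
    return [item["name"] for item in catalogue if goes_with(owned, item)]


if __name__ == "__main__":
    owned = {"name": "my sock", "colour": "blue", "pattern": "striped"}
    catalogue = [
        {"name": "sunset", "colour": "orange", "pattern": "plain"},
        {"name": "polka", "colour": "green", "pattern": "dotted"},
        {"name": "forest", "colour": "green", "pattern": "striped"},
    ]
    print(suggest(owned, catalogue))  # ['sunset', 'polka']
```

Adding a rule is a one-line change, which is exactly why this approach is quick to start with and hard to scale into real fashion advice.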

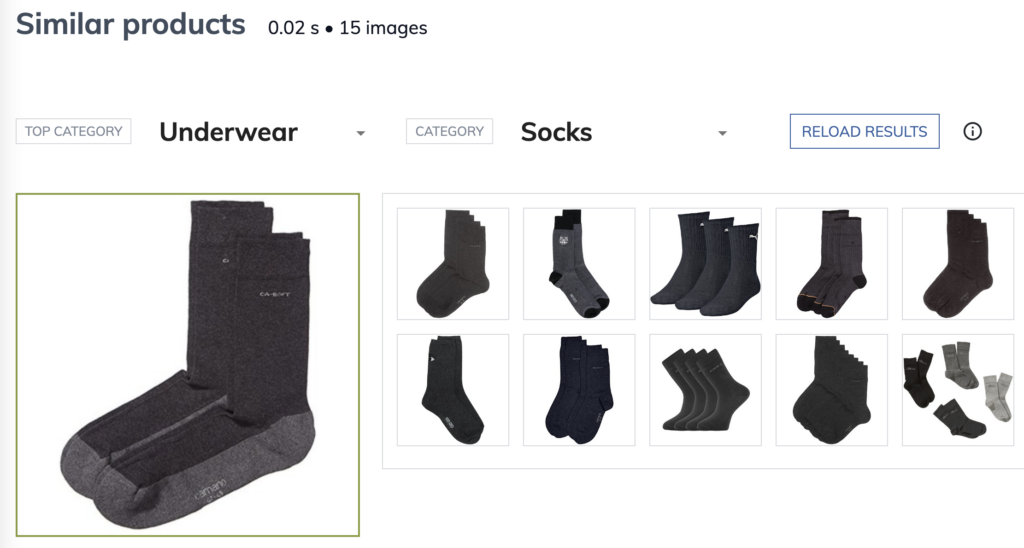

We can also align these categories with our e-shop categories and recommend socks similar to those customers already have. Once we have collected enough images, we will be ready to build a “Fashion advisor” model. We will keep the data extraction models as well, to help us understand customers and make clever suggestions.
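One simple way to find similar socks, assuming the extracted colour, pattern and sock type use the same values as the e-shop categories, is to rank products by how many attributes they share with a sock the customer already owns. This attribute-overlap score is an illustrative choice, not the “Fashion advisor” model; `similar_socks` and the catalogue entries are hypothetical.

```python
from __future__ import annotations

# Attributes produced by the extraction models (assumed to match e-shop category values).
ATTRIBUTES = ("colour", "pattern", "sock_type")


def similarity(a: dict[str, str], b: dict[str, str]) -> int:
    """Count how many attributes two socks share (0 to 3)."""
    return sum(a[attr] == b[attr] for attr in ATTRIBUTES)


def similar_socks(owned: dict[str, str], catalogue: list[dict[str, str]], top_k: int = 2) -> list[str]:
    """Return the names of the top_k catalogue socks most similar to `owned`.

    sorted() is stable, so products with equal scores keep their catalogue order.
    """
    ranked = sorted(catalogue, key=lambda item: similarity(owned, item), reverse=True)
    return [item["name"] for item in ranked[:top_k]]


if __name__ == "__main__":
    owned = {"colour": "blue", "pattern": "striped", "sock_type": "crew"}
    catalogue = [
        {"name": "blue stripe ankle", "colour": "blue", "pattern": "striped", "sock_type": "ankle"},
        {"name": "red dot crew", "colour": "red", "pattern": "dotted", "sock_type": "crew"},
        {"name": "blue stripe crew", "colour": "blue", "pattern": "striped", "sock_type": "crew"},
        {"name": "green dot ankle", "colour": "green", "pattern": "dotted", "sock_type": "ankle"},
    ]
    print(similar_socks(owned, catalogue))  # ['blue stripe crew', 'blue stripe ankle']
```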

Now we know what functions we are looking for:

- Extract colour

- Extract pattern

- Extract sock type

- Custom fashion recommender

Model accuracy matters most. No solution can provide 100% accuracy, because customers’ images will vary so much. Reaching 80–90% accuracy is great!
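To check whether a model reaches that range, we can compare its predictions with a set of manually labelled images. The sketch below computes accuracy for one attribute at a time; the function name and the dictionary-based label format are assumptions for illustration.

```python
from __future__ import annotations


def attribute_accuracy(predicted: list[dict[str, str]], expected: list[dict[str, str]], attribute: str) -> float:
    """Share of images whose predicted `attribute` equals the manual label."""
    if not expected or len(predicted) != len(expected):
        raise ValueError("expected two non-empty lists of the same length")
    correct = sum(p[attribute] == e[attribute] for p, e in zip(predicted, expected))
    return correct / len(expected)


if __name__ == "__main__":
    expected = [{"colour": c} for c in ["blue", "red", "blue", "green", "blue"]]
    predicted = [{"colour": c} for c in ["blue", "red", "blue", "green", "orange"]]
    print(attribute_accuracy(predicted, expected, "colour"))  # 0.8
```

Measuring each attribute separately shows which extractor (colour, pattern or sock type) is dragging overall quality down.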

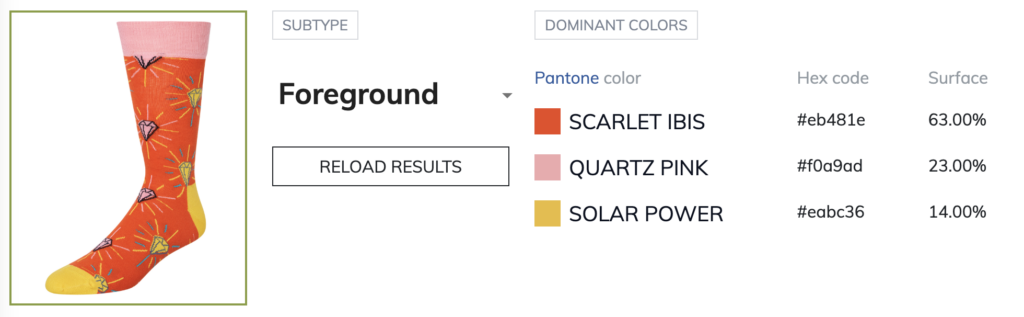

Extract Colour From Image via API

Colour is easy to determine with a general categorization model. Our Dominant colours service should work in this case: we can extract dominant colours by drag and drop in the demo, or via the API. You can choose to ignore the background and analyse only the colours of the product. We can then use these colours to filter the socks on our shop site by a specific colour.
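The Dominant colours service is called over its API, whose request format is not described here, so the sketch below does not reproduce it. Instead, it illustrates the underlying idea with a swapped-in local technique: plain k-means clustering of RGB pixels in NumPy, with an optional mask so that background pixels are ignored. `dominant_colours` is a hypothetical helper, and its output only approximates what a production service would return.

```python
from __future__ import annotations

import numpy as np


def dominant_colours(image: np.ndarray, mask: np.ndarray | None = None, k: int = 3, iters: int = 10):
    """Return k (rgb, share) pairs found by plain k-means, largest share first.

    image: array of shape (H, W, 3); mask: optional bool array of shape (H, W),
    True where the pixel belongs to the product (background pixels are skipped).
    """
    pixels = image[mask] if mask is not None else image.reshape(-1, 3)
    data = pixels.astype(float)
    if len(data) < k:
        raise ValueError("need at least k pixels")

    # Farthest-point initialisation: start from the first pixel, then keep adding
    # the pixel that is farthest from every centre chosen so far.
    centres = data[:1].copy()
    for _ in range(1, k):
        dist = np.linalg.norm(data[:, None, :] - centres[None, :, :], axis=2).min(axis=1)
        centres = np.vstack([centres, data[dist.argmax()]])

    def assign(c: np.ndarray) -> np.ndarray:
        # Index of the nearest centre for every pixel.
        return np.linalg.norm(data[:, None, :] - c[None, :, :], axis=2).argmin(axis=1)

    for _ in range(iters):
        labels = assign(centres)
        for j in range(k):
            members = data[labels == j]
            if len(members):  # an empty cluster keeps its previous centre
                centres[j] = members.mean(axis=0)

    labels = assign(centres)
    counts = np.bincount(labels, minlength=k)
    order = np.argsort(counts)[::-1]
    return [(tuple(int(round(v)) for v in centres[j]), float(counts[j] / len(data))) for j in order]


if __name__ == "__main__":
    img = np.zeros((10, 10, 3), dtype=np.uint8)
    img[:6] = (0, 0, 255)        # blue sock body
    img[6:9] = (255, 165, 0)     # orange stripes
    img[9] = (255, 255, 255)     # white background row
    print(dominant_colours(img))
    product = np.ones((10, 10), dtype=bool)
    product[9] = False           # ignore the background row
    print(dominant_colours(img, mask=product, k=2))
```

The top colour of each product image could then be stored as a shop attribute and used as the value for the colour filter.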