How to deploy object detection on Nvidia Jetson Nano

We developed a computer vision system for object detection, counting, and tracking on Nvidia Jetson Nano.

At the beginning of summer, we received a request for a custom camera system for a factory located in Africa. The project involved detecting and counting items on conveyor belts and checking their visual quality with the help of visual AI. So we developed a complex system with neural networks on a small computer called Jetson Nano. If you are curious about how we did it, this article is for you. And if you need help with building similar solutions for your factory, our team and tools are here for you.

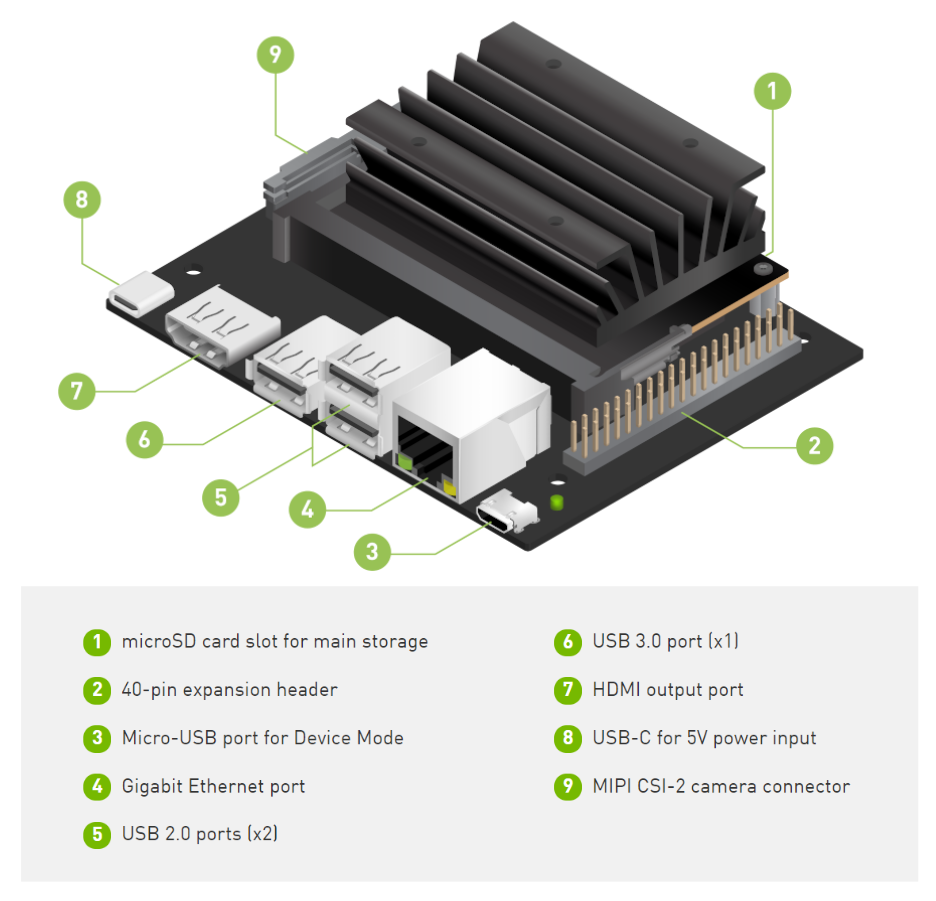

What is NVIDIA Jetson Nano?

There were two reasons why using our API was not an option. First, the factory has unstable internet connectivity. Also, the entire solution needs to run in real time. So we chose to experiment with embedded hardware that can be deployed in such an environment, and we are very glad that we found Nvidia Jetson Nano.

Jetson Nano is an amazing small computer (an embedded or edge device) built for AI. It allows you to do machine learning very efficiently with low power consumption (roughly 5 to 10 watts, depending on the power mode). It runs Ubuntu Linux, can be a part of IoT (Internet of Things) systems, and is suitable for simple robotics or computer vision projects in factories. However, if you know that you will need to detect, recognize, and track tens of different labels, choose a more powerful Jetson device, such as Xavier. It is much faster than Nano and can solve more complex problems.

What is Jetson Nano good for?

Jetson is great if:

- You need real-time analysis

- Your problem can be solved with one or two simple models

- You need a budget solution that is cost-effective to run

- You want to connect it to a static camera – for example, monitoring an assembly line

- The system cannot be connected to the internet – for example, because your factory is in a remote place or for security reasons

The biggest challenges in Africa & South Africa remain connectivity and accessibility. AI systems that can run in house and offline can have great potential in such environments.

Deloitte: Industry 4.0 – Is Africa ready for digital transformation?

Object Detection with Jetson Nano

If you need real-time object detection, use the Yolo-V4-Tiny model from the AlexeyAB/darknet repository. Other, more powerful architectures are available as well. Here is a table of the FPS you can expect from Yolo-V4 models on Jetson Nano:

| Architecture | mAP @ 0.5 | FPS |
| --- | --- | --- |
| yolov4-tiny-288 | 0.344 | 36.6 |
| yolov4-tiny-416 | 0.387 | 25.5 |
| yolov4-288 | 0.591 | 7.93 |
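To put the FPS figures in context, here is a minimal sketch that converts them into per-frame time budgets and picks the most accurate model that still reaches a target frame rate. The numbers are copied from the table above. The `pick_model` helper and the 20 FPS target are illustrative assumptions, not part of our production pipeline.

```python
# FPS and mAP figures copied from the table above.
BENCHMARKS = {
    "yolov4-tiny-288": {"map50": 0.344, "fps": 36.6},
    "yolov4-tiny-416": {"map50": 0.387, "fps": 25.5},
    "yolov4-288": {"map50": 0.591, "fps": 7.93},
}


def frame_time_ms(fps: float) -> float:
    """Return the average time available for one frame, in milliseconds."""
    return 1000.0 / fps


def pick_model(min_fps: float):
    """Return the most accurate model that reaches min_fps, or None."""
    fast_enough = [
        (stats["map50"], name)
        for name, stats in BENCHMARKS.items()
        if stats["fps"] >= min_fps
    ]
    return max(fast_enough)[1] if fast_enough else None


if __name__ == "__main__":
    for name, stats in BENCHMARKS.items():
        print(f"{name}: {frame_time_ms(stats['fps']):.1f} ms per frame")
    # Hypothetical requirement: the conveyor belt needs at least 20 FPS.
    print("Best model at 20 FPS or more:", pick_model(20))
```

Keep in mind that the time budget per frame also has to cover camera capture, tracking, and any recognition model that runs after the detector.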

After training is completed, the next step is converting the weights to a TensorRT engine. TensorRT makes a substantial difference in inference speed on Jetson Nano. So train the model with AlexeyAB/darknet and then convert it with the tensorrt_demos repository. The conversion has multiple steps because you first convert the darknet Yolo weights to ONNX and then convert the ONNX model to TensorRT.
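The two conversion steps can be sketched as a small Python wrapper. The script names `yolo_to_onnx.py` and `onnx_to_tensorrt.py` and the `-m` flag reflect our reading of the tensorrt_demos README and may differ between versions, so treat them as assumptions and check the repository you cloned. The model name `yolov4-tiny-416` and the folder path are placeholders.

```python
import subprocess
from pathlib import Path


def conversion_commands(model_name: str):
    """Build the two conversion commands in the order they must run.

    Assumption: script names and the -m flag match the tensorrt_demos
    repository; verify them against the version you are using.
    """
    return [
        # Step 1: darknet .cfg/.weights -> ONNX
        ["python3", "yolo_to_onnx.py", "-m", model_name],
        # Step 2: ONNX -> TensorRT engine
        ["python3", "onnx_to_tensorrt.py", "-m", model_name],
    ]


def convert(model_name: str, yolo_dir: Path) -> None:
    """Run both steps inside yolo_dir and stop at the first failure."""
    for command in conversion_commands(model_name):
        subprocess.run(command, cwd=yolo_dir, check=True)


# Example call (placeholder path; the folder is expected to already
# contain the trained .cfg and .weights files for the model):
# convert("yolov4-tiny-416", Path("tensorrt_demos/yolo"))
```

Run the conversion on the Jetson itself, because a TensorRT engine is built for the GPU and TensorRT version it was created on.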

There is always a trade-off between accuracy and speed. If you do not require a fast model, we also have good experience with CenterNet. CenterNet can achieve a really nice mAP with precise boxes. In our experience, however, models running on TensorFlow or PyTorch backends are slower than Yolo models. Luckily, we can train both architectures and export them in a suitable format for Nvidia Jetson Nano.

Image Recognition on Jetson Nano

For any image categorization problem, I would recommend using a simple architecture such as MobileNetV2. For example, you can select a depth multiplier of 0.35 and an image resolution of 128×128 pixels. In this way, you can achieve great performance in both speed and precision.
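As a minimal sketch, this is how such a model could be defined with the Keras API in TensorFlow. The `build_classifier` helper and the number of classes are illustrative assumptions. In Keras the multiplier is passed as `alpha`.

```python
def build_classifier(num_classes: int, alpha: float = 0.35, size: int = 128):
    """Build a small MobileNetV2 classifier with Keras."""
    import tensorflow as tf  # imported lazily because TensorFlow is slow to load

    backbone = tf.keras.applications.MobileNetV2(
        input_shape=(size, size, 3),
        alpha=alpha,        # the multiplier mentioned in the text above
        include_top=False,  # drop the 1000-class ImageNet head
        weights=None,       # random init; "imagenet" enables transfer learning
        pooling="avg",      # global average pooling after the last conv layer
    )
    scores = tf.keras.layers.Dense(num_classes, activation="softmax")(
        backbone.output
    )
    return tf.keras.Model(backbone.input, scores)


# Example: a hypothetical three-class quality check (ok / damaged / missing).
# model = build_classifier(num_classes=3)
# model.compile(optimizer="adam", loss="sparse_categorical_crossentropy")
```

Starting from `weights="imagenet"` instead of a random initialization usually helps when the training set is small.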

We recommend using the TFLite backend when deploying the recognition model on Jetson Nano. So train the model with the TensorFlow framework and then convert it to TFLite. You can train recognition models with our platform without any coding for free. Just visit Ximilar App, where you can develop powerful image recognition models and download them for offline usage on Jetson Nano.
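If you train the model yourself, the conversion and a single inference call can look roughly like the sketch below. It uses the standard `tf.lite.TFLiteConverter` and `tf.lite.Interpreter` classes. The helper names `to_tflite` and `classify` are ours, and the optimization setting is an optional assumption rather than a requirement.

```python
def to_tflite(model) -> bytes:
    """Convert a trained Keras model to a TFLite flatbuffer."""
    import tensorflow as tf

    converter = tf.lite.TFLiteConverter.from_keras_model(model)
    # Optional: the default optimizations apply post-training quantization,
    # which shrinks the file. Remove this line to keep float32 weights.
    converter.optimizations = [tf.lite.Optimize.DEFAULT]
    return converter.convert()


def classify(tflite_model: bytes, image):
    """Run one float32 batch of shape (1, H, W, 3) through the model."""
    import tensorflow as tf

    interpreter = tf.lite.Interpreter(model_content=tflite_model)
    interpreter.allocate_tensors()
    input_info = interpreter.get_input_details()[0]
    output_info = interpreter.get_output_details()[0]
    interpreter.set_tensor(input_info["index"], image)
    interpreter.invoke()
    return interpreter.get_tensor(output_info["index"])


# Example: save the converted model so it can be copied to the Jetson.
# with open("model.tflite", "wb") as f:
#     f.write(to_tflite(model))
```

In a real application, create the interpreter once and reuse it for every frame instead of rebuilding it per call as this short sketch does.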

Recommended camera and utilities

Jetson Nano is simple but powerful hardware. However, it is not as powerful as your laptop or desktop computer. That’s why analyzing 4K images on Jetson will be very slow. I would recommend using a camera resolution of 1080p at most. We used a Raspberry Pi camera, which works very well on Jetson and is easy to install!
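The cost of a higher resolution is easy to estimate: a 4K frame has four times as many pixels as a 1080p frame. The sketch below shows that arithmetic, together with a GStreamer pipeline string of the kind commonly used to read a Raspberry Pi (CSI) camera on Jetson through OpenCV. The `nvarguscamerasrc` and `nvvidconv` elements come from NVIDIA's GStreamer plugins. The exact caps are an assumption and may need adjusting for your camera and its sensor modes.

```python
def pixel_count(width: int, height: int) -> int:
    """Number of pixels the pipeline has to process per frame."""
    return width * height


def csi_pipeline(width: int = 1920, height: int = 1080,
                 fps: int = 30, flip: int = 0) -> str:
    """Build a GStreamer pipeline string for a CSI camera on Jetson."""
    return (
        "nvarguscamerasrc ! "
        f"video/x-raw(memory:NVMM), width=(int){width}, height=(int){height}, "
        f"framerate=(fraction){fps}/1 ! "
        f"nvvidconv flip-method={flip} ! "
        "video/x-raw, format=(string)BGRx ! videoconvert ! "
        "video/x-raw, format=(string)BGR ! appsink"
    )


if __name__ == "__main__":
    ratio = pixel_count(3840, 2160) / pixel_count(1920, 1080)
    print(f"4K has {ratio:.0f}x the pixels of 1080p")
    print(csi_pipeline())

# On the Jetson (requires OpenCV built with GStreamer support):
# import cv2
# capture = cv2.VideoCapture(csi_pipeline(), cv2.CAP_GSTREAMER)
# ok, frame = capture.read()
```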

I should mention that with Jetson Nano, you can come across some temperature issues. Jetson is normally shipped with a passive cooling system. However, if this small piece of hardware has to sit in a factory and run stably 24 hours a day, we recommend using an active cooling system such as a fan. Don’t forget to run the following command so the fan on your Jetson starts working:

sudo jetson_clocks --fan
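To check whether the cooling is sufficient, you can log temperatures from your own application. Here is a minimal sketch that reads the standard Linux thermal zones under `/sys/class/thermal`, which report values in thousandths of a degree Celsius. The zone names `CPU-therm` and `GPU-therm` and the 70 °C limit are assumptions; check which zones your board exposes and pick a limit that suits your enclosure.

```python
from pathlib import Path

THERMAL_ROOT = Path("/sys/class/thermal")


def millidegrees_to_celsius(raw: str) -> float:
    """Convert a sysfs reading such as '45500' to 45.5 degrees Celsius."""
    return int(raw.strip()) / 1000.0


def read_temperatures(root: Path = THERMAL_ROOT) -> dict:
    """Return {zone name: temperature in Celsius} for all readable zones."""
    readings = {}
    for zone in sorted(root.glob("thermal_zone*")):
        try:
            name = (zone / "type").read_text().strip()
            raw = (zone / "temp").read_text()
            readings[name] = millidegrees_to_celsius(raw)
        except (OSError, ValueError):
            continue  # some zones cannot be read; skip them
    return readings


def too_hot(readings: dict, limit_c: float = 70.0,
            zones=("CPU-therm", "GPU-therm")) -> bool:
    """Check only the named zones against the limit.

    Assumption: these zone names exist on your board. Other zones may
    report fixed or unrelated values, so they are ignored here.
    """
    return any(readings.get(zone, 0.0) >= limit_c for zone in zones)


if __name__ == "__main__":
    current = read_temperatures()
    print(current, "-> too hot" if too_hot(current) else "-> ok")
```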

Installation steps & tips for development

When working with Jetson Nano, I recommend following the guidelines by Nvidia, for example their instructions on how to install the latest TensorFlow version. There is also a great tool called jtop, which visualizes hardware stats such as GPU frequency, temperature, memory usage, and much more.

Remember that on the Jetson, the CPU and GPU share the same memory. You can easily run out of the 4 GB when running the model alongside other programs. If you want to save more than 0.5 GB of memory on Jetson, run Ubuntu with the LXDE desktop environment. LXDE is more lightweight than the default Ubuntu environment. To increase the available memory, you can also create a swap file. But be aware that if your project requires a lot of memory, heavy swapping can eventually wear out your microSD card. More great tips and hacks can be found on the JetsonHacks page.
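Because memory is the tightest resource, it helps to check how much is left before and after loading a model. Here is a minimal sketch that parses the standard Linux `/proc/meminfo` file. The helper names are ours, and the sample values in the usage comment are made up for illustration.

```python
def parse_meminfo(text: str) -> dict:
    """Parse /proc/meminfo content into {field name: integer value}.

    Sizes in /proc/meminfo are reported in kB (kibibytes).
    """
    values = {}
    for line in text.splitlines():
        key, _, rest = line.partition(":")
        fields = rest.split()
        if fields and fields[0].isdigit():
            values[key.strip()] = int(fields[0])
    return values


def memory_report(meminfo: dict) -> dict:
    """Summarize total, available, and swap memory in GiB."""
    kib_per_gib = 1024 ** 2
    return {
        "total_gib": meminfo["MemTotal"] / kib_per_gib,
        "available_gib": meminfo["MemAvailable"] / kib_per_gib,
        "swap_total_gib": meminfo.get("SwapTotal", 0) / kib_per_gib,
    }


# Usage on the Jetson:
# with open("/proc/meminfo") as f:
#     print(memory_report(parse_meminfo(f.read())))
```

Since the GPU allocates from the same pool, `MemAvailable` drops when a model is loaded onto the GPU, not only when CPU programs start.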

To improve the speed of Jetson, you can also try these two commands, which set the maximum power mode and the maximum clock frequencies:

sudo nvpmodel -m0

sudo jetson_clocks

When using the latest image for Jetson, be sure that you are working with the right version of the OpenCV library. For example, some older tracking algorithms like MOSSE or KCF from OpenCV require a specific version. For some tracking solutions, I recommend looking at the PyImageSearch website.
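One common source of version trouble is that newer OpenCV releases moved some of the older trackers, MOSSE among them, from the top-level `cv2` namespace into `cv2.legacy`. Here is a minimal sketch of a lookup that works with either layout. The `find_tracker_factory` helper is our own, and the trackers also require an OpenCV build that includes the contrib modules.

```python
def find_tracker_factory(cv2_module, name: str):
    """Return the Tracker<name>_create function from wherever it lives."""
    factory_name = f"Tracker{name}_create"
    # Older builds expose trackers at the top level; newer builds keep
    # some of them in the cv2.legacy submodule instead.
    for namespace in (cv2_module, getattr(cv2_module, "legacy", None)):
        factory = getattr(namespace, factory_name, None)
        if factory is not None:
            return factory
    version = getattr(cv2_module, "__version__", "unknown")
    raise RuntimeError(
        f"{factory_name} not found in OpenCV {version}; "
        "the build may lack the contrib tracking module"
    )


# Usage on the Jetson:
# import cv2
# tracker = find_tracker_factory(cv2, "MOSSE")()
# tracker.init(frame, (x, y, width, height))  # box from the detector
# ok, box = tracker.update(next_frame)
```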

Developing on Jetson Nano

The experience of programming challenging projects, exploring new gadgets, and helping our customers is something that deeply satisfies us. We are looking forward to trying other hardware for machine learning such as Coral from Google, Raspberry Pi, or Intel Movidius for Industry 4.0 projects.

Most of the time, we are developing a machine learning API for large e-commerce sites. We are really glad that our platform can also help us build machine learning models on devices running in distant parts of the world with no internet connectivity. I think that there are many more opportunities for similar projects in the future.

Tags & Themes

Related Articles

Ximilar Now Combines Visual and Text-to-Image Search

E-commerce retailers using our search engine now have access to multilingual text search as well.

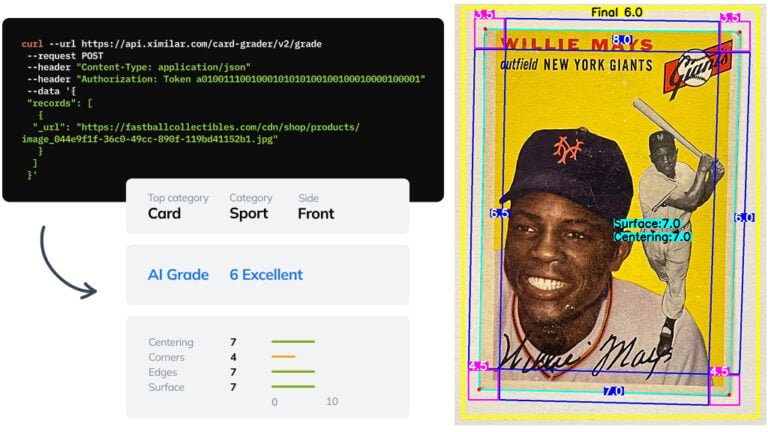

Automate Card Grading With AI via API – Step by Step

A guide on how to easily connect to our trading card grading and condition evaluation AI via API.

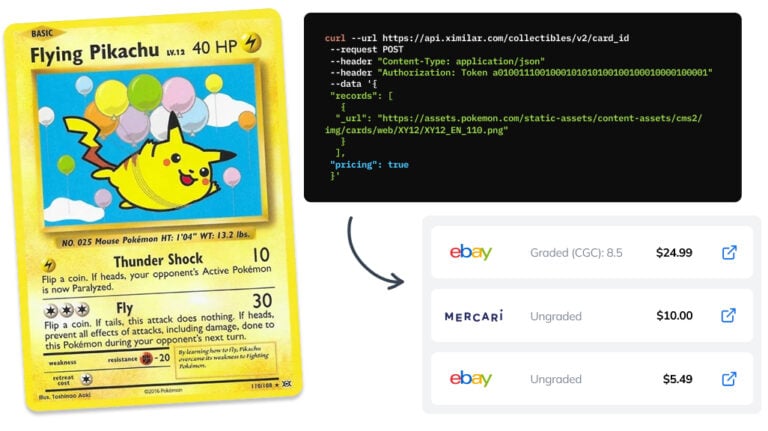

How to Automate Pricing of Cards & Comics via API

A step-by-step guide on how to easily get pricing data for databases of collectibles, such as comic books, manga, trading card games & sports cards.